Improving the Large Language Model (LLM) Systems for Underrepresented Languages

DOI:

https://doi.org/10.36829/63CTS.v12i2.1785

Keywords:

Indigenous language documentation, generative AI, Reinforcement Learning from Human Feedback (RLHF), language revitalization

Abstract

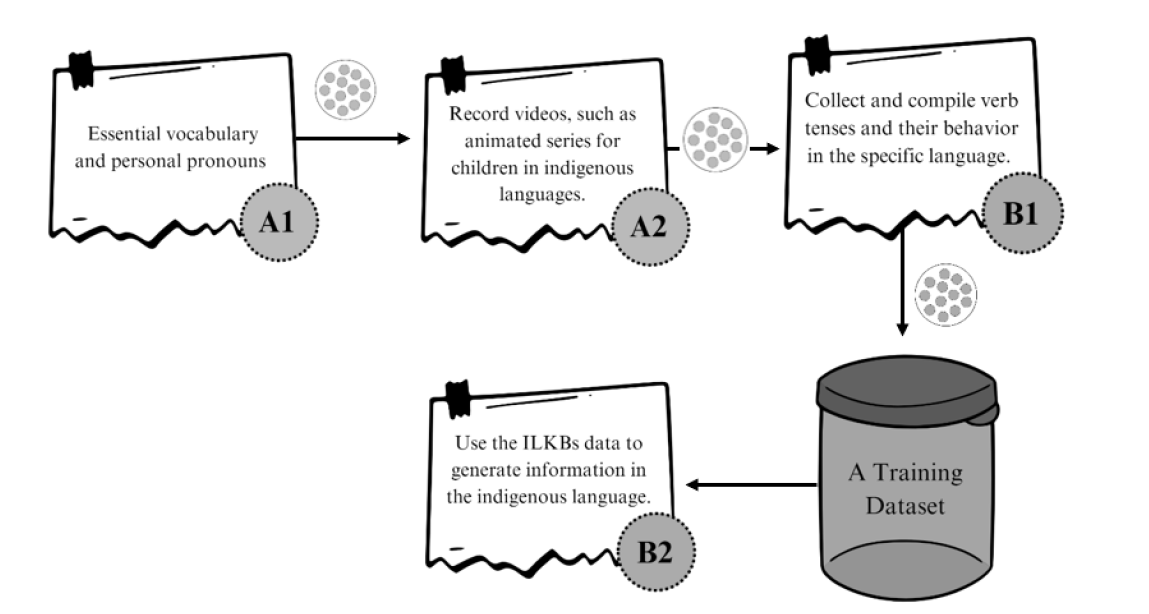

According to Garcés (2020), a total of 7,000 languages are spoken in the world, half of which are expected to disappear by the end of the 21st century. The data behind many AI companies' generative AI systems contains little indigenous-language material, as many of these languages have no presence on the Internet (Kshetri, 2024). This study presents a knowledge-based system that provides a stable knowledge base in indigenous languages, which can then be used in Reinforcement Learning from Human Feedback (RLHF) to add indigenous languages to AI systems.

References

Aguilera, M., & Mariscal-Sánchez, L. (n.d.). Sochiapam Chinantec Living Dictionary. Living Tongues Institute for Endangered Languages; Municipalidad de San Pedro Sochiapam. Retrieved October 31, 2025, from https://livingdictionaries.app/sochiapam-chinantec

Aguilera, M., Morales, J., Vargas, C., Castillo, E., & Ramírez, A. (n.d.). Honduras Garifuna Living Dictionary. Living Tongues Institute for Endangered Languages; Instituto Hondureño de Ciencia, Tecnología y la Innovación. Retrieved October 31, 2025, from https://livingdictionaries.app/honduras-garifuna

Aguilera, M., Simeón-Martínez, A., & Rafael-Palma, A. (n.d.). Pech Living Dictionary. Living Tongues Institute for Endangered Languages. Retrieved October 31, 2025, from https://livingdictionaries.app/pesh

Álvarez-Gayou, J. L. (2003). Cómo hacer investigación cualitativa. Fundamentos y metodología.

Barnes, R. W., Grove, J. W., & Burns, N. H. (2003). Experimental assessment of factors affecting transfer length. Structural Journal, 100(6), 740-748.

Bird, S. (2024, August). Must NLP be extractive? In Proceedings of the 62nd Annual Meeting of the Association for Computational Linguistics (ACL 2024) (pp. 14915–14929). Association for Computational Linguistics.

Bouquiaux, L., & Thomas, J. (1992). Studying and describing unwritten languages. SIL International.

Garcés, F. (2020). La revitalización de las lenguas indígenas del Ecuador: una tarea de todos.

García García, A., Fabiano, E., & O'Hagan, Z. (2016). Taushiro Field Materials, 2016-09 [Collection identifier]. California Language Archive, Survey of California and Other Indian Languages, University of California, Berkeley. http://dx.doi.org/doi:10.7297/X20V89ZG

Go, Y., Ying, Y., Sunjaya, M., & Saragih, J. C. F. (2023). The utilization of Little Fox Chinese video in learning Mandarin vocabulary for elementary school students. In E3S Web of Conferences (Vol. 426, p. 02012). EDP Sciences.

Google. (2024). Google Ngram Viewer: 1800-2019. Retrieved May 27, 2024, from https://books.google.com/ngrams

Hernández Sampieri, R. (2018). Metodología de la investigación: Las rutas cuantitativa, cualitativa y mixta (6.ª ed.). McGraw-Hill.

Kshetri, N. (2024). The academic industry’s response to generative artificial intelligence: An institutional analysis of large language models. Telecommunications Policy, 48(5), 102760.

Little Fox. (n.d.). Series [Web page]. Retrieved October 31, 2025, from https://chinese.littlefox.com/en/story

Merriam, S. B., & Grenier, R. S. (Eds.). (2019). Qualitative research in practice: Examples for discussion and analysis. John Wiley & Sons.

Midjourney. (2024). AI-generated image depicting linguistic fieldwork during indigenous language documentation [Image]. Midjourney CDN. https://cdn.midjourney.com/e4c88a19-2561-4e1c-b142-0d0b6b48843f/0_2.png

Midjourney. (2025). Untitled image generated using the prompt: “a futuristic indigenous linguist documenting endangered languages, cinematic lighting, hyper-realistic, 8k, national geographic documentary style.” [AI-generated image]. Retrieved October 31, 2025, from https://cdn.midjourney.com/ee0bd8db-5b1e-4d0a-81f2-c0c62617e2f8/0_3.png

Ouyang, L., Wu, J., Jiang, X., Almeida, D., Wainwright, C. L., Mishkin, P., Zhang, C., Agarwal, S., Slama, K., Ray, A., Schulman, J., Hilton, J., Kelton, F., Miller, L., Simens, M., Askell, A., Welinder, P., Christiano, P., Leike, J., & Lowe, R. (2022). Training language models to follow instructions with human feedback. arXiv. https://doi.org/10.48550/arXiv.2203.02155

Palacio, P. T., Cathcart, C., Chen, I.-H., Cibelli, E., Hanson, K., Kang, S., Michael, L., Prendergast, E., Sheil, C., Stark, T., & Stickles, E. (2020). Berkeley Field Methods: Garifuna, 2020-06 [Collection identifier]. California Language Archive, Survey of California and Other Indian Languages, University of California, Berkeley. http://dx.doi.org/doi:10.7297/X2RX99FN

Rayson, P. (2008). From key words to key semantic domains. International Journal of Corpus Linguistics, 13(4), 519-549.

Scale AI, Inc. (n.d.). Reinforcement learning from human feedback (RLHF). In Large language models. Retrieved from https://scale.com/guides/large-language-models#reinforcement-learning-from-human-feedback-(rlhf)

License

Copyright (c) 2026 ALEX MANUEL MARTINEZ AGUILERA

This work is licensed under a Creative Commons Attribution-NonCommercial-ShareAlike 4.0 International License.

Authors who publish in this journal accept the following conditions:

- The author grants the Dirección General de Investigación the right to edit, reproduce, publish, and disseminate the manuscript in print or electronic form in the journal Ciencia, Tecnología y Salud.

- The Dirección General de Investigación will grant the work a Creative Commons Attribution-NonCommercial-ShareAlike 4.0 International license.

Funding data

- Scale AI. Grant numbers: OTS Multimodal Project